What is Kubernetes

Container Orchestration - What is Kubernetes? This blog post is for you if you want to learn more about container technology and Kubernetes.

What do you need container orchestration for? What do “Cloud Native”, “DevOps”, “CI/CD” and “Infrastructure as Code” mean, and what is a container anyway? This post is intended to give you an insight into these technologies.

Contents

- Container Technology (Docker)

- Cloud Native – Modern Application Architecture

- Container Orchestration, Kubernetes

- DevOps, CI/CD

Container Technology (Docker)

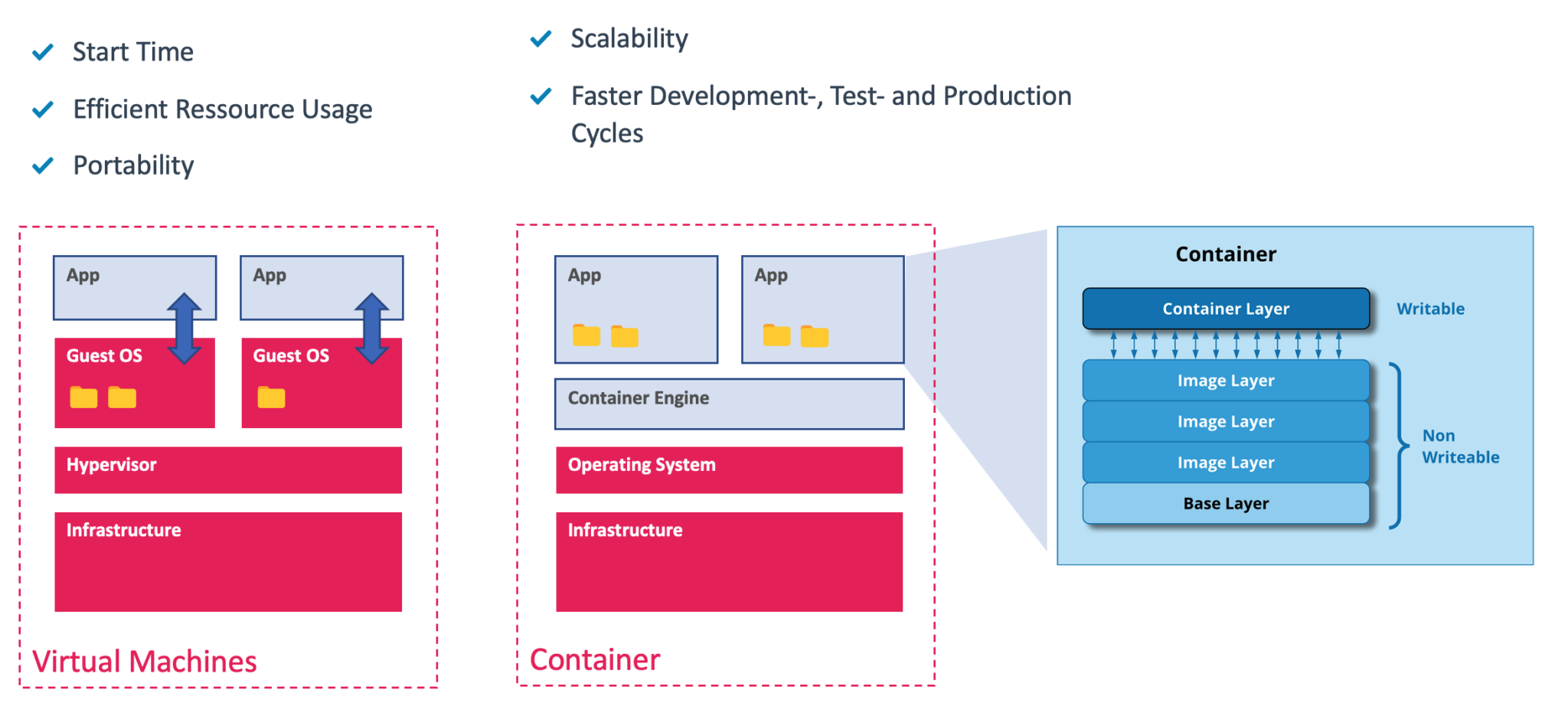

Around the turn of the millennium, we in IT were still mainly dealing with physical servers. Then virtual machines, and companies like VMware, rose to prominence, and the advantages of virtual machines over physical servers were obvious. Things are very different now, and workload virtualization is more advanced.

Legacy software applications presented us with the challenge that there were almost always dependencies on the guest OS. It often happened that an application worked correctly on one system, but not on another. This was due to different patch levels, libraries or other differences between the systems.

Containers offer numerous advantages here. They use the kernel of the running host system and can thus be started very quickly. Their images use a layer-based file system, and all application dependencies are packaged into the image. This makes containerized applications very portable. Container images can be stored in web-based repositories (registries). The largest public one is Docker Hub.

Docker is the best-known container engine, although there are other container runtimes: containerd, CRI-O and runc, to name a few.
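The layering idea can be illustrated with a small Python sketch. This is only a simplified model, not how a container engine is implemented: real engines stack layers with a union file system, while here each layer is just a dictionary. All file paths and contents are made up for illustration.

```python
from collections import ChainMap

# Each layer maps file paths to file contents (all values are hypothetical).
base_layer = {"/bin/sh": "shell binary", "/etc/os-release": "distro 1.0"}
deps_layer = {"/usr/lib/libexample.so": "library v2"}
app_layer = {
    "/app/main.py": "print('hello')",
    "/etc/os-release": "distro 1.0 (patched)",  # overrides the base layer
}

# ChainMap searches its first mapping first, so the topmost layer comes first.
image = ChainMap(app_layer, deps_layer, base_layer)

print(image["/etc/os-release"])  # the upper layer shadows the lower one
print(sorted(image))             # the merged view of all files
```

Because the lower layers are never modified, several images could share the same base layer, which is one reason images are quick to distribute.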

Cloud Native – Modern Application Architecture

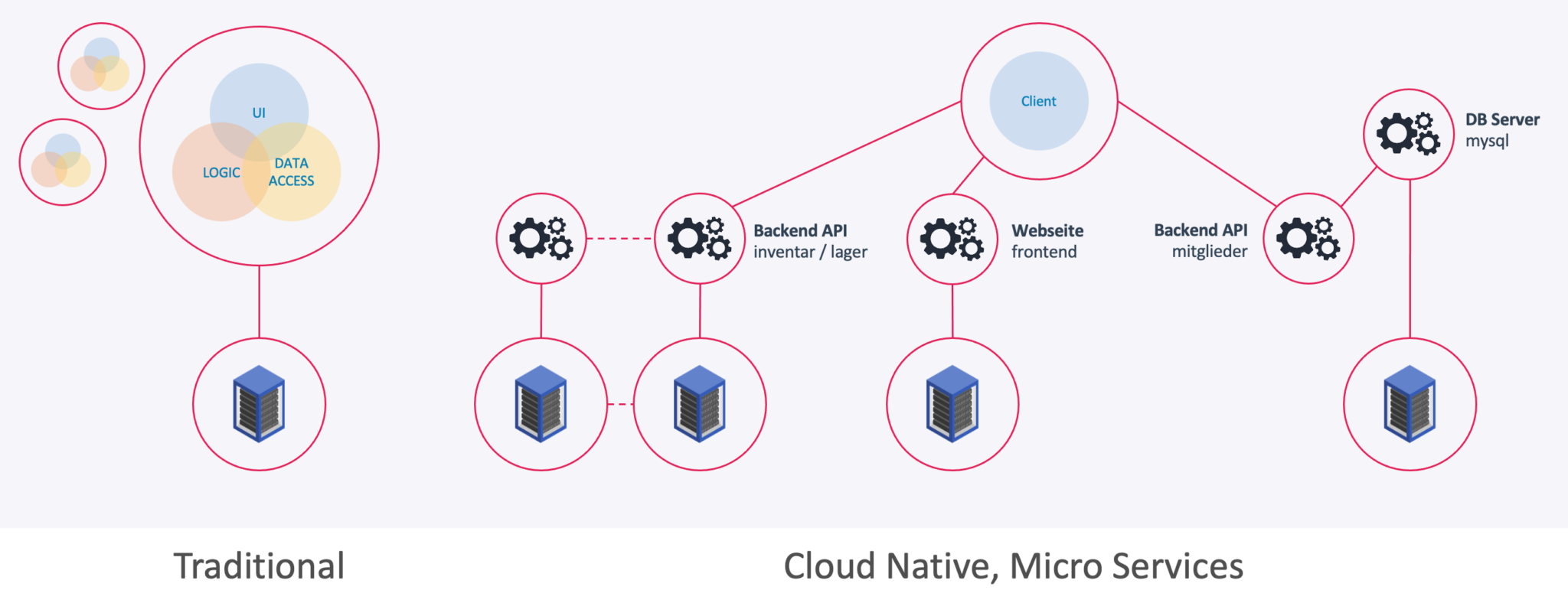

Legacy applications are still very common today. These have a monolithic structure and are usually installed on the client itself. Sometimes a database is used on a physical server or similar. This creates two main problems:

- A dependency of the client application/version on the server/database

- A heterogeneous install base. Do all clients have the same version of the software installed?

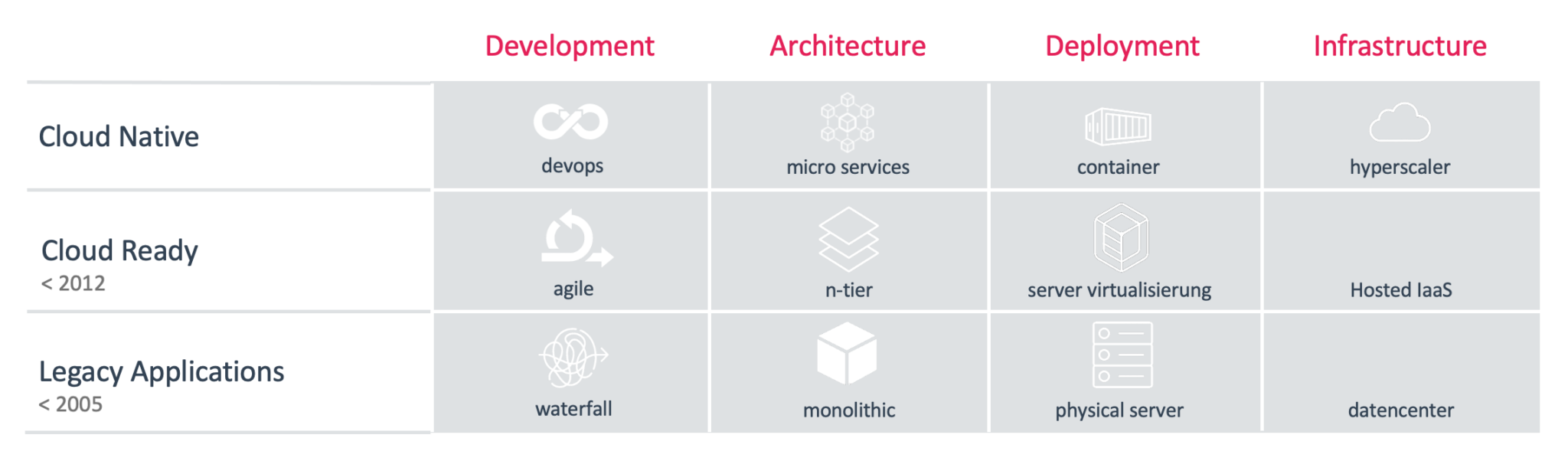

This results in very long development cycles (sometimes one release every six months). The development of the software can only proceed slowly, since consideration must be given to the software versions that are already in circulation. Sometimes “big bang” migrations have to be carried out because of the dependency between client and server versions.

Modern “cloud native” applications have been specially developed for the cloud. Software development companies that follow this approach can work with much faster development cycles (e.g. 100 releases per day) and thus react to customer needs much faster.

The modern approach relies on microservices. The application is thus divided into smaller components (separation of concerns), which are mostly hosted in containers on the server. The frontend (i.e. the graphical user interface) is also hosted as a container on the server and can be run by the client in the browser.

So you can see that the entire application is hosted on the server side. This results in a homogeneous install base, and development can focus entirely on the latest software release.

In addition, the containers can be scaled very well by simply starting multiple instances and placing a load balancer in front of them.
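As a rough illustration of scaling by adding instances, the following Python sketch distributes requests across several instances in round-robin order. Round robin is only one possible strategy and is an assumption here, as are the class name and the instance names.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands each incoming request to the next instance in turn."""

    def __init__(self, instances):
        if not instances:
            raise ValueError("at least one instance is required")
        self._instances = cycle(list(instances))

    def route(self, request):
        instance = next(self._instances)
        return f"{instance} handled {request}"


# Three hypothetical instances of the same container.
balancer = RoundRobinBalancer(["web-1", "web-2", "web-3"])
results = [balancer.route(f"request-{i}") for i in range(4)]
for line in results:
    print(line)
```

The fourth request lands on the first instance again, so adding an instance to the list spreads the same load over more containers.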

Container Orchestration, Kubernetes

So why do you need a container orchestrator like Kubernetes?

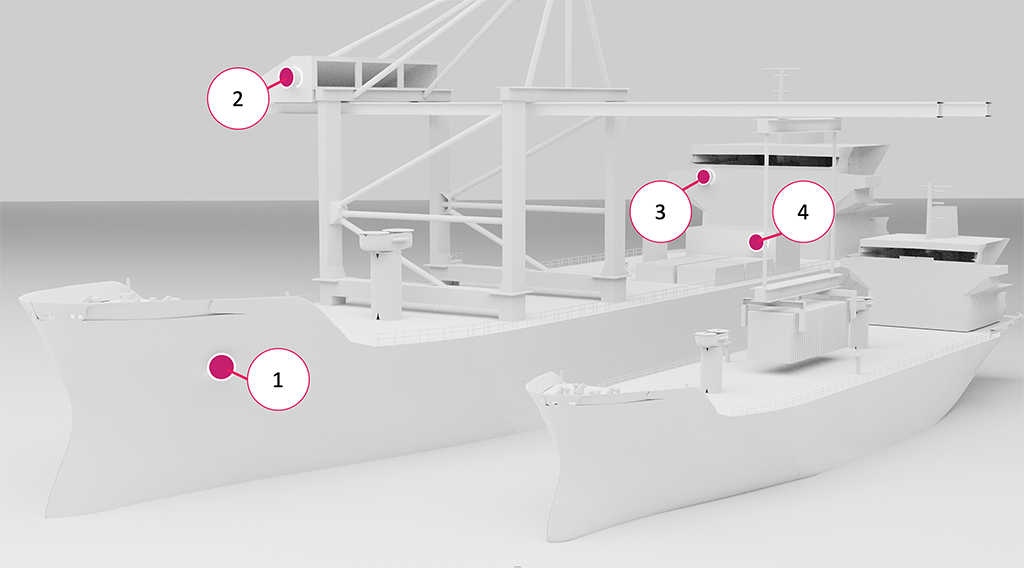

Your physical infrastructure / servers carry the workload (containers – or “pods” in the case of Kubernetes). So think of the servers as container ships onto which the containers (pods) are distributed. The job of Kubernetes is container orchestration.

- Which container should be started on which host (ship / worker node)? Which host can and should carry which load? How should the load be shared?

- Should the same container be loaded onto several ships in case one sinks?

- Monitoring of running containers. If a container crashes, a new container must be deployed automatically.

- If a host (ship / worker node) disappears, new containers must be automatically re-provisioned on another ship.

All of the tasks described are tasks of a container orchestrator such as Kubernetes. So you can think of Kubernetes as a master ship (master node). In simplified terms, it consists of the following components:

- etcd

A high-availability key-value store that keeps records of all containers (pods), ships (hosts / worker nodes) and the current status of the entire fleet.

- Kube Scheduler

The Kube Scheduler decides which container should be loaded onto which ship.

- Kube API server

The entire communication of all components runs via the Kube API server.

- Kube Controller Manager

The Controller Manager monitors the status of all containers on all ships and notices when a ship has sunk or a container has gone missing. This is then communicated via the API server so that the scheduler can load new containers if necessary.
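The interplay between controller and scheduler can be sketched as a loop that compares the desired state with the actual state. The Python below is a toy model under simplifying assumptions, not Kubernetes code: the function names, the "fewest pods" placement rule and the ship names are all hypothetical.

```python
def schedule(nodes, placements):
    """Pick the healthy node that currently carries the fewest pods."""
    healthy = [name for name, ok in nodes.items() if ok]
    if not healthy:
        raise RuntimeError("no healthy node available")
    load = {name: 0 for name in healthy}
    for node in placements.values():
        if node in load:
            load[node] += 1
    # Ties are broken alphabetically to keep the result deterministic.
    return min(healthy, key=lambda name: (load[name], name))


def reconcile(desired_replicas, nodes, placements):
    """Bring the actual placements in line with the desired replica count."""
    # Forget pods that were running on a node that has disappeared.
    for pod, node in list(placements.items()):
        if not nodes.get(node, False):
            del placements[pod]
    # Start replacement pods until the desired count is reached again.
    counter = 0
    while len(placements) < desired_replicas:
        pod = f"pod-{counter}"
        counter += 1
        if pod not in placements:
            placements[pod] = schedule(nodes, placements)
    return placements


nodes = {"ship-a": True, "ship-b": True, "ship-c": True}
placements = reconcile(3, nodes, {})
print(placements)  # one pod per ship

nodes["ship-b"] = False  # a ship sinks
placements = reconcile(3, nodes, placements)
print(placements)  # the lost pod is re-created on a remaining ship
```

The important point is that nobody tells the system "restart pod-1"; it only knows that three replicas are wanted and keeps correcting the fleet until that is true.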

So you can instruct Kubernetes to run containers on your infrastructure. You can determine exactly how many container instances should be running, and on which hosts, at any time. Depending on the volume of requests, these parameters can even be adjusted automatically.

DevOps, CI/CD

In Kubernetes, all resources are described declaratively in the form of code (typically as YAML manifests), and their state is kept in the etcd store. Thus, a pure “Infrastructure as Code” approach is pursued here. You can read more about the Agile and DevOps philosophy in our blog post.
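To give an idea of how such an automatic adjustment can work, here is a Python sketch of the basic scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the measured metric to its target, rounded up. The function name, the default limits and the example numbers are assumptions, and the real autoscaler applies further rules (such as a tolerance and stabilization) that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Simplified autoscaling rule: scale in proportion to the metric ratio."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result within the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


# 3 replicas each see 200 requests/s, but the target is 100 requests/s.
print(desired_replicas(3, 200, 100))  # scale out
# 4 replicas each see 50 requests/s with the same target.
print(desired_replicas(4, 50, 100))   # scale in
```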
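To make the “Infrastructure as Code” idea more concrete, the following Python sketch builds a description of a Kubernetes Deployment and prints it as JSON. Manifests are more commonly written in YAML; JSON is used here only so the example needs nothing beyond the standard library. The application name, labels and image reference are hypothetical.

```python
import json

# Declarative description of the desired state: three replicas of one container.
# The name "frontend" and the image reference are made-up examples.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "frontend"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": {"app": "frontend"}},
        "template": {
            "metadata": {"labels": {"app": "frontend"}},
            "spec": {
                "containers": [
                    {
                        "name": "frontend",
                        "image": "registry.example.com/shop/frontend:1.4.2",
                    }
                ]
            },
        },
    },
}

manifest_json = json.dumps(deployment, indent=2)
print(manifest_json)
```

Because the desired state is plain text, it can be versioned in a Git repository and reviewed like any other code change.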

CI/CD pipelines (continuous integration, continuous delivery) come in many different forms. Some companies use a “DevSecOps approach” and add various security-related functions to the CI stage, such as checking the code for passwords or checking the libraries used for known vulnerabilities.

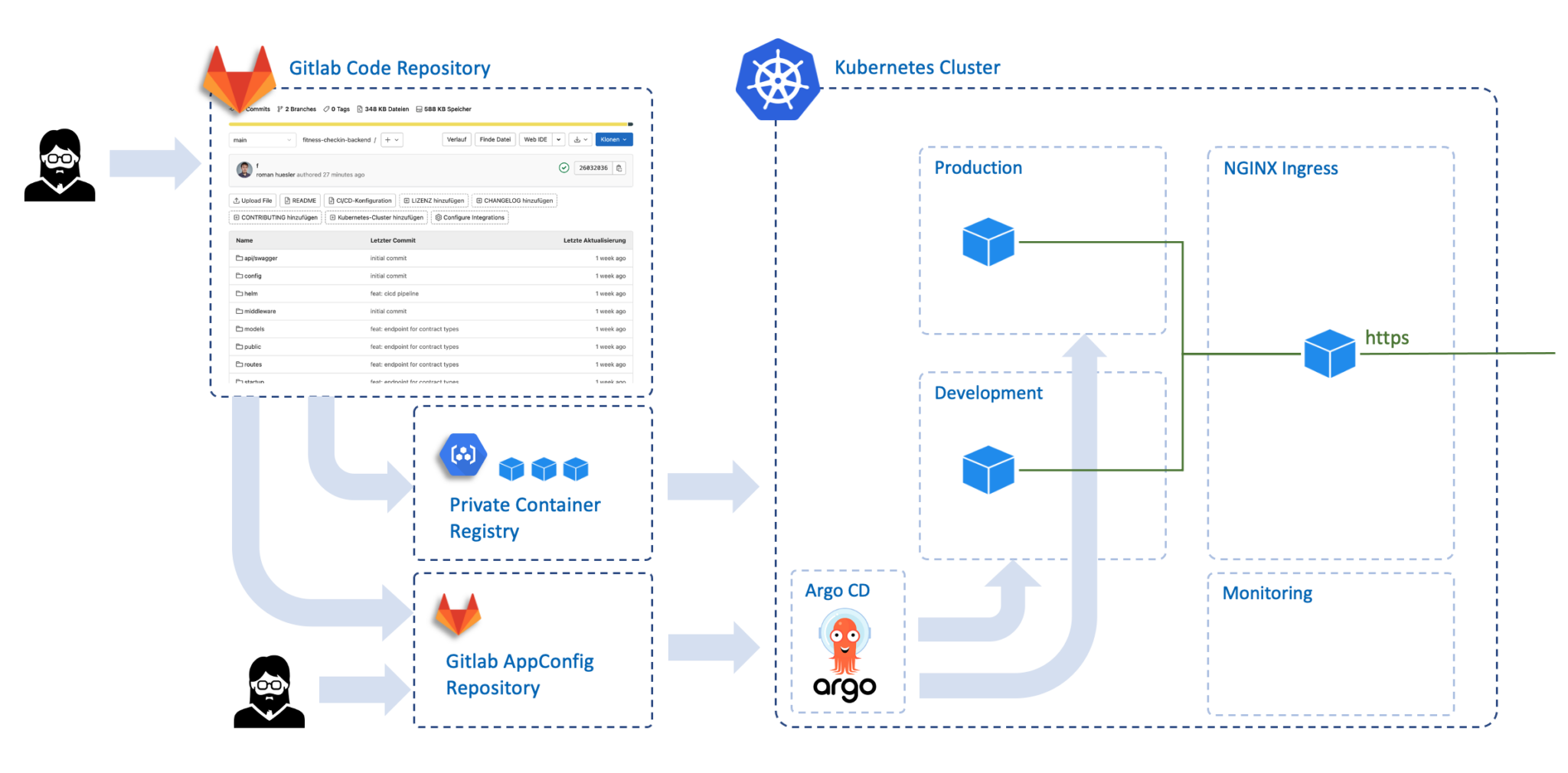

We have chosen the graphic above as an example because the demarcation between CI and CD can be seen very clearly in this example pipeline.

- CI (Continuous Integration)

A software developer works on the software application and uploads new code to the GitLab repository. A new version number is generated automatically, and a container image is built and uploaded to a container registry.

- CD (Continuous Delivery)

Now the new version of the container needs to be started in Kubernetes. Argo notices that a new version of the container is available. It tells the Kubernetes API server to stop the instances of the old container version and load the new version from the container registry. You can use a “rolling update” strategy, for example. In that case, the running containers are not all stopped at once; the change proceeds gradually and is canceled if there is a problem with the new container.
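The rolling update described above can be sketched in a few lines of Python. This is a toy model of the idea only, not the behavior of Argo or Kubernetes: the function name, the one-at-a-time step size and the health check callback are assumptions made for illustration.

```python
def rolling_update(instances, new_version, is_healthy):
    """Replace instances one at a time; cancel if a new instance is unhealthy.

    instances: list of version strings, one per running container.
    is_healthy: callback taking (version, index) and returning True or False.
    Returns (resulting_instances, succeeded).
    """
    original = list(instances)
    current = list(instances)
    for index in range(len(current)):
        current[index] = new_version  # stop one old instance, start a new one
        if not is_healthy(new_version, index):
            return original, False    # cancel and keep the previous version
    return current, True


# A healthy release replaces every instance step by step.
print(rolling_update(["v1", "v1", "v1"], "v2", lambda version, index: True))

# A release whose second instance fails its check is canceled.
print(rolling_update(["v1", "v1", "v1"], "v3", lambda version, index: index < 1))
```

Since only one instance is replaced per step, the remaining instances keep serving requests while the update is in progress.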

Conclusion

I hope we were able to explain the general function and task of container orchestration with this blog post. You can also see why much faster development cycles are possible when using this technology than with older technologies and application architectures.

opensight.ch – roman huesler